The batch reader by default has a limit set for 20,971,520 bytes, after which will handle the events and cause enqueuing of events. Splunk by default when forwarding large events/logs, they will stop using the tailreader for data ingestion, and will pass the event/log to the batch reader for forwarding. Hi the maxKBps value has been set to zero.įurther, a case was opened with support and it was shared with them that we were getting lots of Enqueuing a very large file with bytes_to_read events and they suggested to increase the value to a higher value or larger than the file size as a workaround that would help out with the delays issue. Thank you for your time and advice, much appreciated. Is there a way to avoid re-indexing/duplication of the events (if my understanding is correct and this scenario can occur). To add here, the directory contains multiple log files for each hour of the day as and when events received from the network devices. What I'm most curious and apprehensive about with the option (b) is if another UF is installed on the syslog server and tries to monitor a separate directory that was previously being monitored by the existing UF then how do we avoid re-indexing/duplication of events? Since, there were some events that were already onboarded from that directory by the existing UF and due to the delay the remaining were not yet onboarded while we disabled monitoring for the existing UF and enabled the new UF to monitor that directory. However, the delay is still there.Ĭoming to your two options, (a) running multiple syslog servers with UF to distribute load - that is being considered however might take time so I'm thinking of trying out your second option (b) running multiple (2) UF on a single syslog server with each UF monitoring different/separate directories. Hi update, we have been trying out different options such as increasing the parsingQueue from10 MB to about 100 MB as per the conversations with support. 
Would appreciate any helpful advice on how to go about addressing this issue.

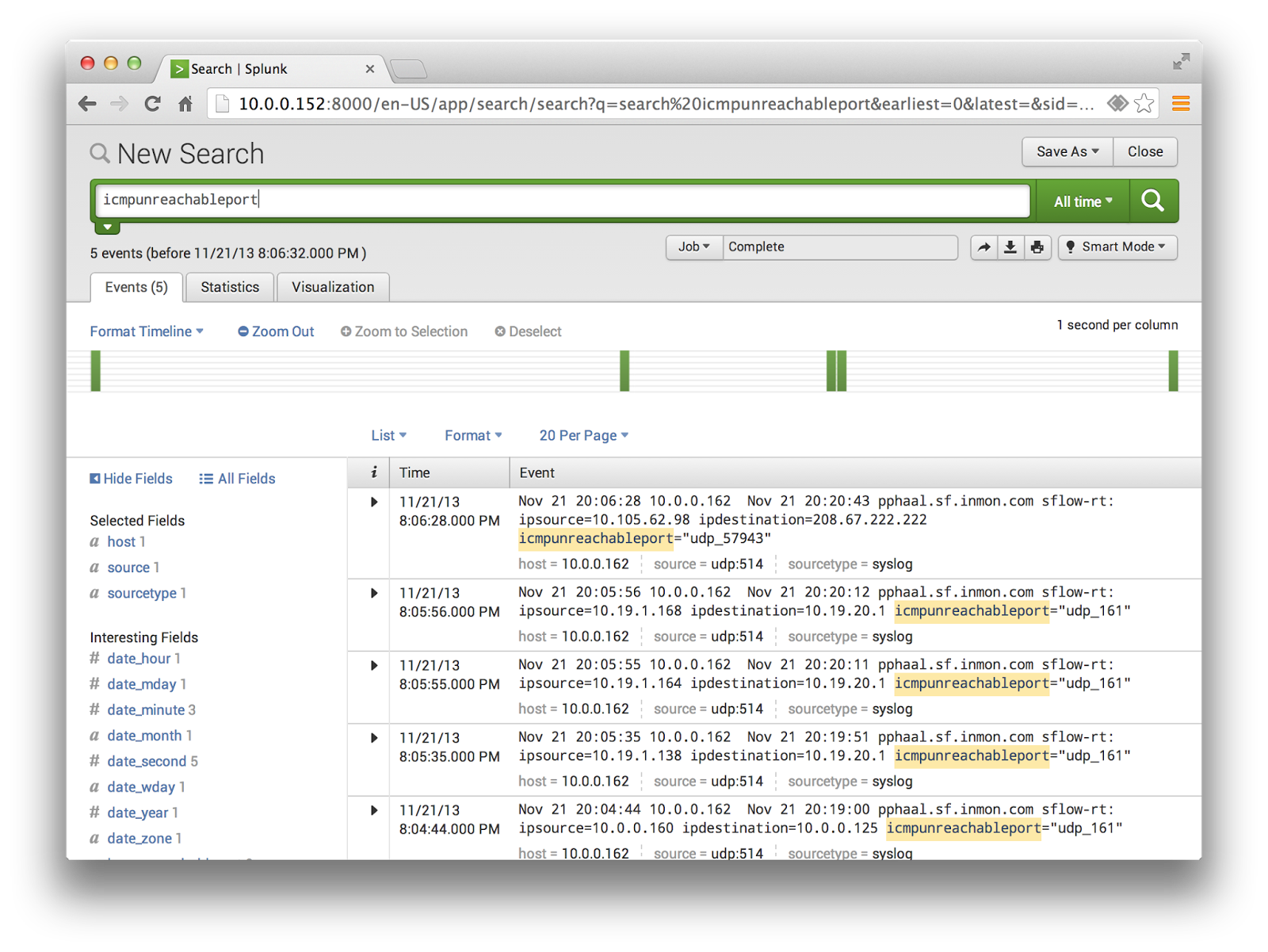

However in some cases, the logs are missed because it seems by the time the Splunk agent reaches the log file to read 2 days have passed so that the log file is no longer available as per retention policies. There are many dips in logs received in Splunk when investigating and usually these dips recover as soon as we start receiving the logs that are sent by Splunk UF. The effect of this is that even though Logs are being forwarded to Splunk but there is a large delay time associated with when the logs are received on Syslog Server and when they are indexed/searchable in Splunk. in the batch reader, with bytes_to_read=xxxxxxx, reading of other large files could be delayed WARN Tail Reader - Enqueuing a very large file=/logs/. However, even now we are getting many events in splunkd.log of

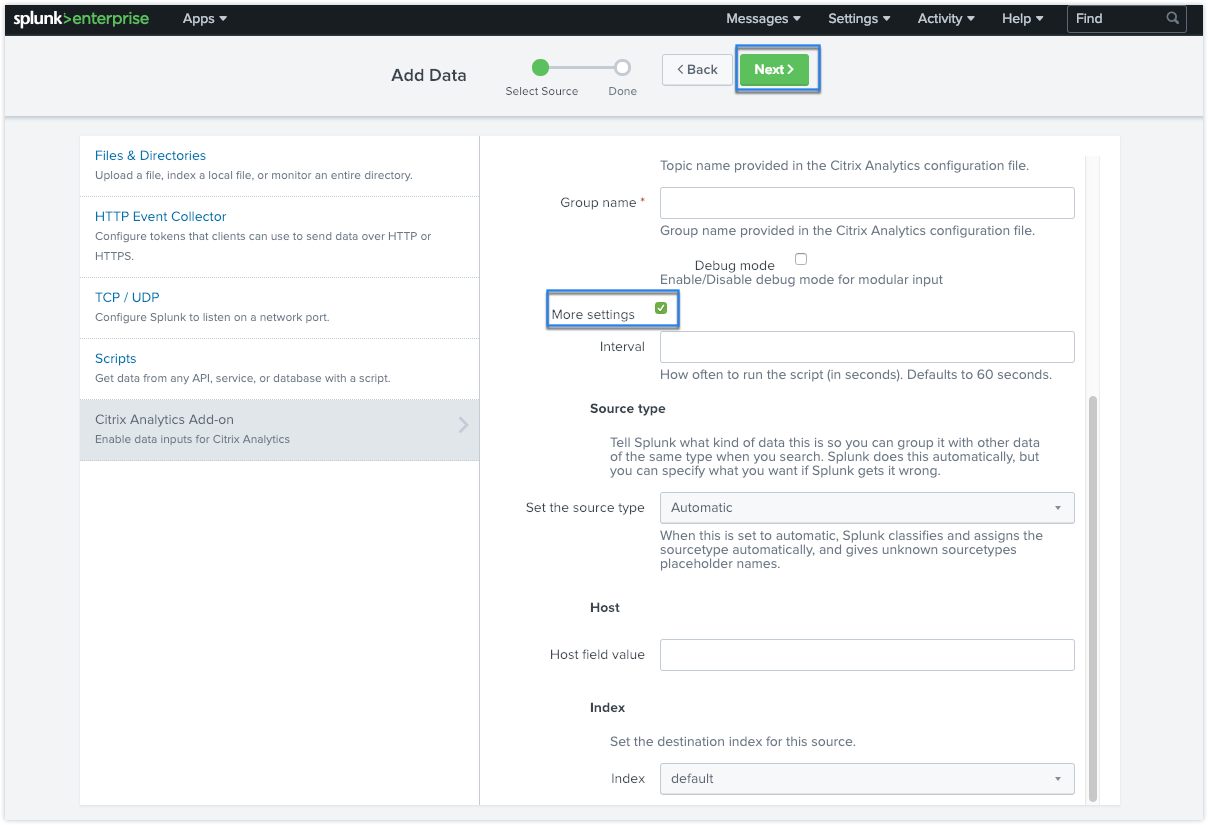

To onboard the logs into Splunk we have deployed a Universal Forwarder on the Syslog Server and configured the below to optimize it, We have configured retention policies such that only 2 days of Logs are kept locally on the Syslog Server. The average size of daily log volume on the Syslog Server is about 800 GB to 1 TB approx. We have a central Syslog Server (Linux Based) that is handling all network devices Logs and storing them locally.

I would like some advice on how to best resolve my current issue with regards to the Logs being delayed that are being sent from Syslog Server via a Universal Forwarder.
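The sustained rate implied by the daily volume puts the `maxKBps` and parsingQueue changes above in perspective. The following Python sketch is plain arithmetic, not a Splunk tool. It assumes traffic spread evenly over the day, decimal units (1 GB = 10^9 bytes, 1 KB = 1,000 bytes; Splunk's own KB may be 1,024 bytes, which does not change the picture), and 256 KB/s as the Universal Forwarder's default `maxKBps` throttle, which is worth confirming in the limits.conf documentation for your version.

```python
# Back-of-the-envelope arithmetic only; no Splunk APIs are used here.
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400 s
GB = 10**9  # decimal units, assumed for illustration
MB = 10**6
KB = 10**3
UF_DEFAULT_MAX_KBPS = 256  # assumed UF default for maxKBps ([thruput] in limits.conf)


def sustained_rate_bytes_per_s(daily_bytes: float) -> float:
    """Average rate needed to keep up with `daily_bytes` per day,
    assuming traffic is spread evenly (real peaks are higher)."""
    return daily_bytes / SECONDS_PER_DAY


def queue_buffer_seconds(queue_bytes: float, rate_bytes_per_s: float) -> float:
    """Seconds of incoming data a queue of `queue_bytes` can absorb
    if nothing downstream drains it."""
    return queue_bytes / rate_bytes_per_s


if __name__ == "__main__":
    for daily_gb in (800, 1000):
        rate = sustained_rate_bytes_per_s(daily_gb * GB)
        print(f"{daily_gb} GB/day needs ~{rate / KB:,.0f} KB/s "
              f"(~{rate / KB / UF_DEFAULT_MAX_KBPS:.0f}x a 256 KB/s cap)")
        for queue_mb in (10, 100):
            secs = queue_buffer_seconds(queue_mb * MB, rate)
            print(f"  a {queue_mb} MB queue holds ~{secs:.1f} s of that traffic")
```

At 1 TB/day this works out to roughly 11,574 KB/s on average, about 45 times a 256 KB/s cap, which is why `maxKBps = 0` (unthrottled) matters here. A 100 MB queue covers only about 8.6 seconds of that average traffic, which would be consistent with the observation that enlarging the parsingQueue alone did not remove the delay.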
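A rough way to check how many of the hourly files cross the 20,971,520-byte batch-reader threshold mentioned above is to list them. The Python sketch below is hypothetical: the `/logs` default path and the `files_over_threshold` helper are placeholders. It compares total file size, whereas the `bytes_to_read` in the warning suggests Splunk compares the unread remainder, so treat the result as an approximation. The threshold itself is, as far as I know, `min_batch_size_bytes` in the `[inputproc]` stanza of limits.conf; confirm that against the documentation for your Splunk version before raising it as support suggested.

```python
import os
import sys
from typing import List, Tuple

# Default batch-reader threshold quoted above: 20,971,520 bytes (20 MiB).
BATCH_THRESHOLD_BYTES = 20 * 1024 * 1024


def files_over_threshold(directory: str,
                         threshold: int = BATCH_THRESHOLD_BYTES) -> List[Tuple[str, int]]:
    """Return (path, size) for regular files directly inside `directory`
    (non-recursive) whose size exceeds `threshold`, largest first."""
    large = []
    with os.scandir(directory) as entries:
        for entry in entries:
            if entry.is_file(follow_symlinks=False):
                size = entry.stat(follow_symlinks=False).st_size
                if size > threshold:
                    large.append((entry.path, size))
    large.sort(key=lambda item: item[1], reverse=True)
    return large


if __name__ == "__main__":
    # Hypothetical default path; pass the real monitored directory as an argument.
    target = sys.argv[1] if len(sys.argv) > 1 else "/logs"
    if not os.path.isdir(target):
        print(f"{target} is not a directory", file=sys.stderr)
    else:
        for path, size in files_over_threshold(target):
            print(f"{size:>15,d}  {path}")
```

Usage: `python3 large_files.py /path/to/monitored/dir` prints each oversized file with its size. If most hourly files show up, raising the threshold above the typical hourly file size is the kind of change support's workaround describes.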
